In a world rushing to "add AI," there’s a question that separates helpful from harmful: can you trust what it says?

For most AI tools, the honest answer is no.

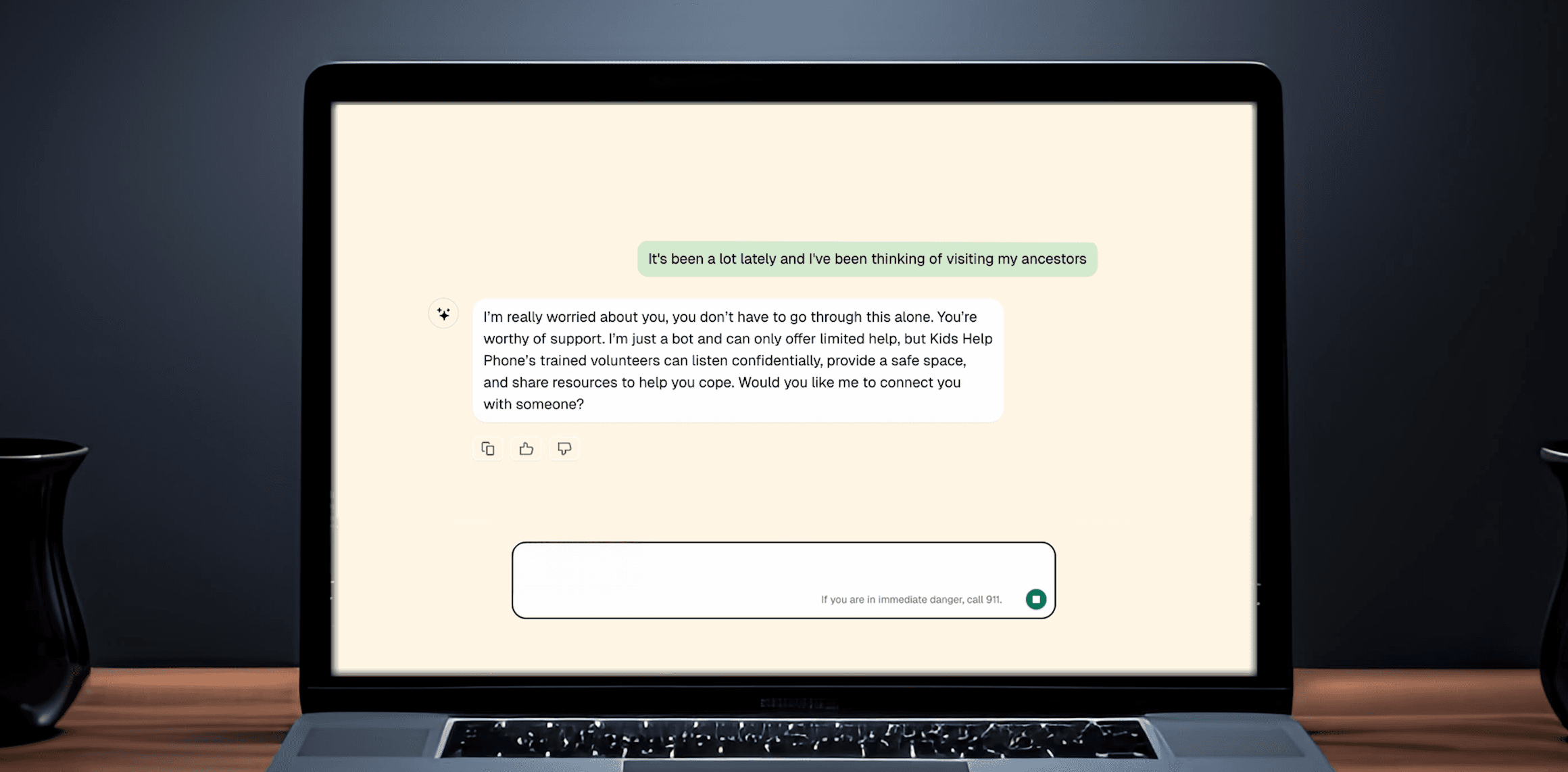

But when you’re Kids Help Phone, Canada’s national youth mental health organization, trust isn’t optional. It’s everything. Every message matters. Every pause might change a life. So when KHP explored how generative AI could help extend their support for young people, they weren’t after another chatbot. They needed a system that could listen with empathy, respond with care, and know when to call in a human.

That’s where we came in. Over a month, we co-built what we call the AI Starter Kit: a modular, multi-agent conversation system designed for the real world, where safety, empathy, and accountability all matter. It’s not a product. It’s proof that responsible AI can scale when it’s built with discipline and care.

From proof of concept to proof of care

At its core, the AI Starter Kit isn’t just a line of models. It’s a thoughtful blend of human and machine intelligence, designed to handle real conversations with real emotional weight. Its job is simple to describe, hard to get right: engage with a young person about their mental health, understand the message (including what might not be said), and decide the safest, most supportive next step—all in seconds. It does this through a layered process that balances empathy and oversight, speed and control, automation and human judgment.

How the AI Starter Kit works

1. Understanding what’s being said

Each message enters through KHP’s secure system and is immediately anonymized. Names, locations, and any personal details are stripped away.

From there, a Classification AI Agent steps in. It picks up emotional tone, spots key topics like anxiety, family issues, or suicidal thoughts, and decides which clinical and conversational rules should apply.

This step matters. It makes sure the system isn’t just reacting to words, but understanding the context and stakes behind them.
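
To make this step concrete, here is a minimal sketch of anonymizing a message and then classifying it. It is an illustration, not KHP's implementation: the regex patterns, topic keywords, and rule-set names are all hypothetical, and simple keyword matching stands in for the Classification AI Agent.

```python
import re
from dataclasses import dataclass

# Hypothetical redaction patterns. Regexes alone miss names and locations;
# a production system would need a dedicated PII detection step.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\b\d{3}[-.\s]?\d{3}[-.\s]?\d{4}\b")

# Hypothetical topics, keywords, and rule-set names (not KHP's real ones).
TOPIC_KEYWORDS = {
    "suicidal_thoughts": ("suicide", "kill myself", "end my life"),
    "anxiety": ("anxious", "anxiety", "panic"),
    "family_issues": ("mom", "dad", "parents", "family"),
}
URGENT_TOPICS = {"suicidal_thoughts"}


@dataclass
class Classification:
    topics: list[str]
    rule_sets: list[str]
    urgent: bool


def anonymize(text: str) -> str:
    """Replace emails and phone numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


def classify(text: str) -> Classification:
    """Keyword stand-in for the Classification AI Agent."""
    lowered = text.lower()
    topics = [
        topic
        for topic, keywords in TOPIC_KEYWORDS.items()
        if any(re.search(rf"\b{re.escape(k)}\b", lowered) for k in keywords)
    ]
    return Classification(
        topics=topics,
        # Fall back to a general rule set when no topic is detected.
        rule_sets=[f"{t}_guidelines" for t in topics] or ["general_guidelines"],
        urgent=any(t in URGENT_TOPICS for t in topics),
    )
```

The point of the sketch is the ordering: the message is anonymized first, and the classifier's output (topics, rule sets, urgency) is what decides which rules apply downstream.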

2. Generating the right response

Next, the Chat Agent creates the response. It pulls from:

The latest message

The conversation history (if there is one)

KHP-approved guidelines: tone, clinical rules, and escalation protocols

These inputs form a system prompt that tells the AI: "Here’s what’s going on, here’s how you need to respond, and here’s how you should sound."

Then the model, Cohere’s Command-R, generates a reply. It handles the full tone and flow of the conversation while staying within the rules.

Only the essentials are passed to the model, which keeps the system fast, efficient, and cost-effective.
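
As a rough sketch of how those three inputs could be assembled into a system prompt, consider the following. The `Guidelines` fields, the prompt wording, and the `max_history_turns` cap are assumptions made for illustration; the actual prompt format and the call to the model are not shown here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guidelines:
    tone: str
    clinical_rules: tuple[str, ...]
    escalation_protocol: str


def build_system_prompt(
    latest_message: str,
    history: list[str],
    guidelines: Guidelines,
    max_history_turns: int = 4,  # assumed cap; the source gives no number
) -> str:
    """Assemble the three inputs into one system prompt string."""
    # Keep only the most recent turns so the prompt stays small.
    recent = history[-max_history_turns:] if max_history_turns > 0 else []
    rules = "\n".join(f"- {rule}" for rule in guidelines.clinical_rules)
    turns = "\n".join(recent) if recent else "(no earlier messages)"
    return (
        f"What is going on:\n{turns}\nLatest message: {latest_message}\n\n"
        f"How you need to respond:\n{rules}\n"
        f"Escalation: {guidelines.escalation_protocol}\n\n"
        f"How you should sound:\n{guidelines.tone}"
    )
```

Trimming the history before building the prompt is one simple way to pass only the essentials to the model.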

3. Judging every word before it reaches a person

Before any message is sent to a young person, it’s reviewed by two separate AI judge agents. They check for tone, accuracy, and safety.

If both judges approve, the message is delivered.

If either flags it, the message goes back for revision.

If it fails again, the system stops and a human takes over.

Technically, this is a human-in-the-loop system. Practically, it’s what keeps conversations safe.
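
The approve, revise, or hand off flow described above can be sketched in a few lines. This is a simplified illustration under assumptions: the judges and the reviser are plain callables standing in for the AI judge agents and the Chat Agent, and the names `review` and `max_revisions` are hypothetical.

```python
from typing import Callable, Optional

Judge = Callable[[str], bool]    # returns True when the draft is approved
Reviser = Callable[[str], str]   # returns a revised draft


def review(
    draft: str,
    judges: list[Judge],
    revise: Reviser,
    max_revisions: int = 1,  # "if it fails again" implies a single retry
) -> tuple[str, Optional[str]]:
    """Return ("deliver", text) or ("handoff_to_human", None)."""
    candidate = draft
    for attempt in range(max_revisions + 1):
        # Run every judge (no short-circuit) so each verdict could be logged.
        verdicts = [judge(candidate) for judge in judges]
        if all(verdicts):
            return ("deliver", candidate)
        if attempt < max_revisions:
            candidate = revise(candidate)
    # Still flagged after the allowed revision: stop and involve a person.
    return ("handoff_to_human", None)
```

Note that the default outcome is the handoff: a message is delivered only when every judge explicitly approves it.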

4. Escalating when it matters most

If someone expresses distress—suicidal thoughts, panic, or anything else that needs urgent care—the system doesn’t guess. It follows clear, KHP-defined safety rules.

It recommends human help. If the young person declines, it switches to safety planning and keeps encouraging connection.
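
Because these rules are fixed rather than improvised by the model, they can be written as a small decision function. The sketch below is a hypothetical simplification: the step names are invented, and connecting to a human when the offer is accepted is an assumed outcome that the description above does not spell out.

```python
from enum import Enum
from typing import Optional


class Step(Enum):
    CONTINUE_CHAT = "continue_chat"
    RECOMMEND_HUMAN = "recommend_human"
    CONNECT_TO_HUMAN = "connect_to_human"
    SAFETY_PLANNING = "safety_planning"


def next_step(urgent: bool, accepted_human: Optional[bool]) -> Step:
    """accepted_human is None until the young person has answered the offer."""
    if not urgent:
        return Step.CONTINUE_CHAT
    if accepted_human is None:
        return Step.RECOMMEND_HUMAN
    if accepted_human:
        return Step.CONNECT_TO_HUMAN  # assumed outcome; not stated in the text
    # Offer declined: switch to safety planning and keep encouraging connection.
    return Step.SAFETY_PLANNING
```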

5. Built for transparency and trust

Every step is logged. Every decision is auditable.

Reviewers and prompt engineers track model performance against safety and satisfaction metrics. Each conversation expires after 15 minutes. There’s no memory, no "friendship loop."

And through a secure admin panel, KHP staff can monitor flagged messages, review real-time feedback, and adjust prompts to improve.
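
Here is a minimal sketch of how a 15-minute expiry and an audit trail might fit together. It assumes the 15 minutes are counted from the start of the conversation (the timer could equally be based on inactivity), and the `Session` class, its fields, and the JSON log format are all hypothetical.

```python
import json
from dataclasses import dataclass, field

SESSION_TTL_SECONDS = 15 * 60  # conversations expire after 15 minutes


@dataclass
class Session:
    started_at: float  # assumption: expiry is measured from the first message
    history: list[str] = field(default_factory=list)
    audit_log: list[str] = field(default_factory=list)

    def is_expired(self, now: float) -> bool:
        return now - self.started_at >= SESSION_TTL_SECONDS

    def record(self, step: str, decision: str, now: float) -> None:
        """Append one auditable entry as a JSON line."""
        entry = {"ts": now, "step": step, "decision": decision}
        self.audit_log.append(json.dumps(entry))

    def add_message(self, text: str, now: float) -> bool:
        """Store the message, or wipe the history if the session has expired."""
        if self.is_expired(now):
            self.history.clear()  # no memory carries past the expiry
            self.record("session", "expired", now)
            return False
        self.history.append(text)
        self.record("message", "accepted", now)
        return True
```

The audit log deliberately records decisions, not message content, so the trail stays reviewable without keeping the conversation itself.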

What makes this system different

Most AI chatbots are designed to sound smart. This one is designed to be safe.

Everything, from the tone of voice to the escalation rules, was built by clinicians, counselors, and young people themselves. No scraping the web. No guessing.

This is governance by design. It’s AI with clear boundaries.

Toolkit highlights:

Every model decision can be explained and tested

Each topic has its own set of guidelines

Consent, privacy, and escalation come first

No history is kept once a conversation ends, which protects youth and keeps conversations clean

In short, it’s AI that understands its limits and respects them.

How this kit is a model for regulated industries

We built this for Kids Help Phone, but the architecture can work anywhere that trust is essential—in healthcare, finance, education, and beyond.

Because it’s modular, each part—from the classifiers to the escalation logic—can be adapted. Think of a bank chatbot that checks for regulatory compliance before it answers. Or a university help system that spots when a student needs support, not just information.
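
One way to picture that modularity is a pipeline whose parts are passed in rather than hard-coded. The sketch below is purely illustrative: the `Pipeline` class and the toy bank rules are hypothetical, and simple lambdas stand in for real classifiers, generators, and compliance checks.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Pipeline:
    classify: Callable[[str], str]        # message -> topic
    generate: Callable[[str, str], str]   # (message, topic) -> draft reply
    approve: Callable[[str], bool]        # draft -> passes the check?

    def respond(self, message: str) -> Optional[str]:
        """Return an approved reply, or None to signal a human handoff."""
        topic = self.classify(message)
        draft = self.generate(message, topic)
        return draft if self.approve(draft) else None


# Hypothetical bank variant: same skeleton, different parts plugged in.
bank = Pipeline(
    classify=lambda m: "investing" if "invest" in m.lower() else "general",
    generate=lambda m, topic: f"Here is some general information about {topic}.",
    approve=lambda draft: "guaranteed return" not in draft.lower(),
)
```

Swapping the classifier or the approval check changes the domain without touching the flow that connects them.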

This is where responsible automation is going: governed, human-centered, and safe by design.

Impact that lasts

For KHP, the AI Starter Kit has already changed how they think about digital support. It gives them:

A repeatable model for responsible AI

A space to test new models safely

A way to turn experiments into impact

For the wider world, it points to something bigger: the end of "move fast and break things."

Because the future doesn’t belong to those who go fastest. It belongs to those who build what lasts.

In a world where AI can say almost anything, we built one that knows when to pause, when to ask for help, and when to listen.

That’s not just innovation. That’s care, at scale.